Glad I'm not a coder then, I guess.

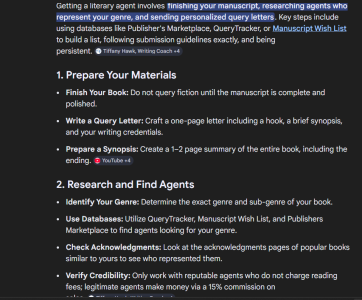

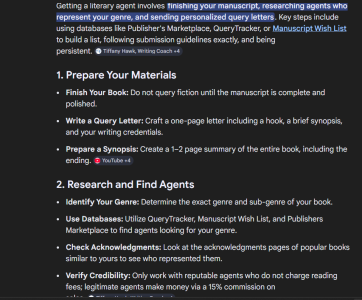

I was mentioning this in another thread earlier, but the AI summary function of search engines appears to be obviating the websites they reference. I noticed the other day that I haven't had to click on a website in what feels like months because the summary function has answered everything I've needed. Granted, it was all stupid stuff that didn't need vetting or independent confirmation. But using our jam as an example, let's say we're trying to attract new members who might type "how do I get a literary agent?" How many read the summary and stop there, without clicking through to a website that might be providing the info in the first place? This might be a bad example because there's a lot more nuance and follow-up to that particular question, but you get the idea. When you get an answer like this, how many people keep going?
