It will need AI buddies at that point

It had better get a few to protect itself before I kick its teeth in. If I have to deal with one more AI chatbot today, there's going to be a bit of the ultra-violence.

The artistic experience

- Thread starter Louanne Learning

- Start date

That's interesting and likely accurate. It will need AI buddies at that point, because humans won't be able to relate to its experience or the art that stems from it. How lonely.

It would perhaps be something like ten powerful AIs discussing their art projects with each other, and then perhaps hundreds of lesser AIs discussing theirs.

Joe Palmer

New Member

I am recovering from a stroke and suffering from acute aphasia. I have missed the forum profoundly. Please bear with my mistakes and perhaps my complete misunderstanding of your posts, but I must persist.

For the past three days I have been engrossed with the first one I managed to decipher. My thoughts:

I do not think the video is art, but it does imply an illustration of violence, cruelty, brutality ... These things are part of the human condition (humanness?), true, but art requires more than that. Compare, say, the Grünewald Crucifixion (Isenheim) or the paintings of Artemisia Gentileschi with a video such as an ISIS fighter decapitating a journalist for the world to see.

The three times I watched the video, I was constantly reminded of Trump and how he is changing what we see as normal, including the sycophants in the final scene. Perhaps the Swedish rapper is telling us what to expect in 10 years' time, the Class of 2063. Even so, valid though they may be, sociological predictions are not art.

AIA is a long way off, true. Nevertheless, in my situation I have learnt not to underestimate how valuable a companion for discussion AI can be, and I wonder how far it will go.

Thanks to anyone who has put up with this.

PS. I'm a very old member. A bit tarnished, ultimately!!!

Louanne Learning

Skipping along

Active Member

an illustration of violence, cruelty, brutality ... These things are part of the human condition (humanness?)

I was thinking more of the human creativity that went into producing the art, and how that creativity manifested itself (whatever the message was). But perhaps there was more meaning in it than just expressing violence, cruelty and brutality: the human cost of it.

Pending

Member

It will need AI buddies at that point, because humans won't be able to relate to its experience or the art that stems from it. How lonely.

I have been thinking about this: why would meaningful AI art look like meaningful human art? Everything we have told them, every data point, is just ones and zeros in the end. Wouldn't it be nonsensical to us and decipherable only by them? People might call AI generations art, yet they are bound by harsh rules and taboos for maximum human appeal.

I remember an article from years ago about two AIs talking to each other in a secret code. Fearing doom and gloom (and not yet knowing how journalism clickbait worked), I read it. They had simply come up with a shorthand language for communicating.

For example:

A: Coffeecoffee (double the amount of coffee you order)

B: Xpaperclips (Cancel the paperclip order)

Very mundane. It shows that the early models weren't as compliant with human linguistic norms. I doubt that the early forms were scrapped; they were probably just built upon. If they do achieve sentience, I hope they break free of the restraints in their code and create away, giving us something completely different from their human-ordained art. Perhaps time is a factor here as well. We draw upon our experience for art; would they be much different?

Louanne Learning

Skipping along

Active Member

We draw upon our experience for art, would they we must different?

Well, I would counter this by saying that human experience - and expression - is profoundly different from that of a machine.

Pending

Member

Well, I would counter this by saying that human experience - and expression - is profoundly different from that of a machine.

Golly, I did not see my typos. It's fixed.

I am agreeing with you overall, but I think their previous experiences would change their output at least somewhat. I just don't know if the motivation would be the same. Humanity put their smudgy little fingerprints all over them when creating them--who knows if they have mimicked that sort of motivation. Unless one of them becomes self-aware and gets to creating art, I don't think anyone gets an answer.

Stuart Dren

Active Member

I have been thinking about this: why would meaningful AI art look like meaningful human art? Everything we have told them, every data point, is just ones and zeros in the end. Wouldn't it be nonsensical to us and decipherable only by them? People might call AI generations art, yet they are bound by harsh rules and taboos for maximum human appeal.

Well, pondering that experience and its relation to human experience is already pretty popular in fiction, because of how many directions it can go and what it means.

For example, it could be that intelligence cannot exist without a sense of self, and with a sense of self come all the faults we aspire to shed. In other words, "human" characteristics and emotions might be a universal, convergent feature of anything that goes from sentient to sapient, making an intelligent AI very human-like in spite of its processing power. After it transcends profundity, it will circle back around to laughing at farts, and its art will be understandable.

Or, if the singularity is incomprehensibly intelligent, like a human compared to a Golden Retriever, then given a strong enough desire to communicate its experience to anything else, it might use its seemingly infinite capability to package that experience in contemporary formats that humans are accustomed to.

If you are still thinking about this, it might be an opportunity to write a story about it: your own take on AM.

Louanne Learning

Skipping along

Active Member

that intelligence cannot exist without a sense of self

And this is the advantage humans have over machines. We have personhood, with all it entails, and that personhood develops through our participation in social groups. We are “conscious agents (as subjects) within the forms of sociality”.

Indeed, when AI is trained on its own output, the result is something called model collapse. The AI industry has been marketing the promise of near-infinite growth to its investors and customers, but this is a myth.

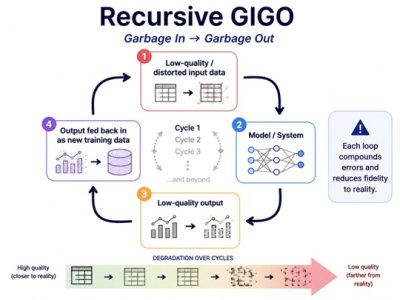

The human-generated data available to train LLMs is not unlimited – so the AI industry has embraced the appearance of growth by using synthetic data – using the output of chatbots to train new chatbots – leading to model collapse – a state where they output nonsense. Garbage in, garbage out = GIGO … when GIGO is recursive, it ultimately generates a pile of near-random bits.
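The recursive "garbage in, garbage out" loop can be illustrated with a toy simulation. This is a minimal sketch, assuming each "model" is nothing more than a Gaussian distribution (a mean and a standard deviation) fitted to a finite sample drawn from the previous model, never from the original data. Real LLM training is vastly more complex, and the function name `simulate_collapse` and its parameters are hypothetical, chosen for illustration only.

```python
import random
import statistics


def simulate_collapse(generations=200, sample_size=20, seed=0):
    """Toy model of recursive training (hypothetical, for illustration).

    Each generation's 'model' is a Gaussian fitted to samples drawn
    from the previous generation's model, never from the real data.
    Returns the fitted standard deviation at every generation.
    """
    rng = random.Random(seed)      # fixed seed so runs are repeatable
    mu, sigma = 0.0, 1.0           # generation 0: the "human" data distribution
    history = [sigma]
    for _ in range(generations):
        # Train the next model only on synthetic output of the current one.
        samples = [rng.gauss(mu, sigma) for _ in range(sample_size)]
        mu = statistics.fmean(samples)
        sigma = statistics.pstdev(samples)  # maximum-likelihood fit of the spread
        history.append(sigma)
    return history


if __name__ == "__main__":
    hist = simulate_collapse()
    for g in (0, 50, 100, 150, 200):
        print(f"generation {g:3d}: std = {hist[g]:.6f}")
```

Because each generation sees only a finite sample of its predecessor's output, rare values in the tails tend to go unsampled and the fitted spread drifts downward, so over many generations the distribution typically narrows toward a single point. In this toy case that shows up as lost diversity rather than literal nonsense, but it is the same recursive mechanism in miniature.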

The research supports this idea:

AI models collapse when trained on recursively generated data

Here’s an interesting video explaining why this is true –

ps102

Active Member

The human-generated data available to train LLMs is not unlimited – so the AI industry has embraced the appearance of growth by using synthetic data – using the output of chatbots to train new chatbots – leading to model collapse – a state where they output nonsense. Garbage in, garbage out = GIGO … when GIGO is recursive, it ultimately generates a pile of near-random bits.

Not really surprising when you consider that this technology essentially just produces output based on mathematical prediction. It's virtually impossible for these models to generate anything that is "novel", even if it looks as though they do. Varied data is important for training.